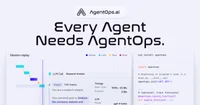

AgentOps

AgentOps is a monitoring and evaluation platform that tracks and analyzes the performance, reliability, and behavior of AI agents in production environments, helping teams improve them.

AgentOps is an observability and evaluation platform designed specifically for AI agents and autonomous workflows. It helps teams understand, debug, and improve complex agent behavior by capturing detailed execution traces, metrics, and outcomes across multi-step interactions. Its primary purpose is to give developers clear visibility into how agents make decisions and where they fail, so that they can systematically improve reliability and performance over time.

AgentOps provides granular session tracing, including step-by-step actions, tool calls, model responses, and state transitions, enabling precise diagnosis of issues in production or during development. It supports automated evaluation of agent runs using configurable metrics, custom tests, and scenario-based benchmarking, making it easier to compare prompts, models, and orchestration strategies. The platform includes dashboards for monitoring latency, cost, error rates, and success criteria, as well as replay capabilities to inspect and iterate on problematic workflows. Integration is typically done via lightweight SDKs or API calls, allowing teams to instrument their existing agent frameworks with minimal changes.
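To make step-level session tracing and metric aggregation more concrete, here is a minimal sketch in plain Python. It does not use the AgentOps SDK: `Session`, `Event`, `record`, and `summary` are hypothetical names chosen for illustration, and the per-step cost is assumed to be passed in by the caller rather than derived from model token usage.

```python
"""Hypothetical sketch of agent session tracing (NOT the AgentOps SDK API)."""
from __future__ import annotations

import time
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


@dataclass
class Event:
    """One recorded step in an agent session, e.g. a tool call or model call."""
    kind: str                    # e.g. "tool" or "llm" (illustrative labels)
    name: str
    latency_s: float
    cost_usd: float = 0.0        # assumed to be supplied by the caller
    error: Optional[str] = None  # repr of the exception if the step failed


@dataclass
class Session:
    """Collects the events of one agent run and summarizes them."""
    events: list[Event] = field(default_factory=list)

    def record(self, kind: str, name: str, fn: Callable[..., Any], *args: Any,
               cost_usd: float = 0.0, **kwargs: Any) -> Any:
        """Run fn, time it, and record success or failure as an Event."""
        start = time.perf_counter()
        try:
            result = fn(*args, **kwargs)
        except Exception as exc:
            elapsed = time.perf_counter() - start
            self.events.append(Event(kind, name, elapsed, cost_usd, repr(exc)))
            raise  # re-raise so the agent's own error handling still runs
        self.events.append(Event(kind, name, time.perf_counter() - start, cost_usd))
        return result

    def summary(self) -> dict[str, Any]:
        """Aggregate step count, latency, cost, and error rate."""
        n = len(self.events)
        errors = sum(1 for e in self.events if e.error is not None)
        return {
            "steps": n,
            "total_latency_s": sum(e.latency_s for e in self.events),
            "total_cost_usd": sum(e.cost_usd for e in self.events),
            "error_rate": errors / n if n else 0.0,
        }


# Usage with two hypothetical tools: one succeeds, one fails.
def search(query: str) -> str:
    return f"results for {query}"


def broken_tool() -> None:
    raise ValueError("tool unavailable")


session = Session()
session.record("tool", "search", search, "weather", cost_usd=0.001)
try:
    session.record("tool", "broken_tool", broken_tool)
except ValueError:
    pass  # the failure is already captured in the trace
print(session.summary())
```

Re-raising inside `record` keeps tracing passive: the trace captures the failure, but the agent's control flow is unchanged. A production platform would additionally persist these events and attach model inputs and outputs so that runs can be replayed and compared.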

Alternatives & Similar Tools

ElevenAgents

ElevenAgents is a platform for building, configuring, and deploying AI-powered voice agents for websites, mobile applications, and call centers.

Stream Chat AI

Stream Chat AI is a web-based tool that lets users chat with AI agents while watching Twitch streams, enabling context-aware assistance and interaction based on live content.
